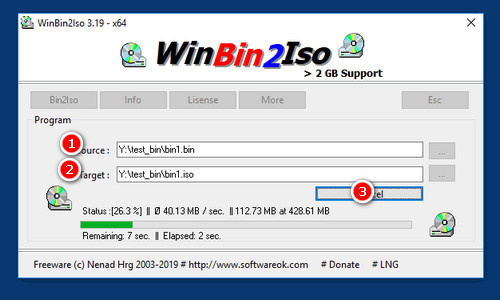

Magnitude: a fast, simple vector embedding utility library

A feature-packed Python package and vector storage file format for utilizing vector embeddings in machine learning models in a fast, efficient, and simple manner, developed by Plasticity. It is primarily intended to be a simpler / faster alternative to Gensim, but can be used as a generic key-vector store for domains outside NLP. It offers unique features like out-of-vocabulary lookups and streaming of large models over HTTP. Published in our paper at EMNLP 2018 and available on arXiv.

You can install this package with pip:

pip install pymagnitude # Python 2.7

Vector space embedding models have become increasingly common in machine learning and traditionally have been popular for natural language processing applications. A fast, lightweight tool to consume these large vector space embedding models efficiently is lacking.

The Magnitude file format (.magnitude) for vector embeddings is intended to be a more efficient universal vector embedding format that allows for lazy-loading for faster cold starts in development, LRU memory caching for performance in production, multiple key queries, direct featurization to the inputs for a neural network, performant similarity calculations, and other nice-to-have features for edge cases like handling out-of-vocabulary keys or misspelled keys and concatenating multiple vector models together. It is also intended to work with large vector models that may not fit in memory.

It uses SQLite, a fast, popular embedded database, as its underlying data store. It uses indexes for fast key lookups, and memory mapping, SIMD instructions, and spatial indexing for fast similarity search in the vector space off-disk, with good memory performance even between multiple processes. Moreover, memory maps are cached between runs, so the speed improvements persist even after a process is closed.

Benchmarks cover the following queries:
Warm single key query (same key as cold query)
Warm multiple key query (n=25) (same keys as cold query)
First most_similar search query (n=10) (worst case)
First most_similar search query (n=10) (average case) (w/ disk persistent cache)
Subsequent most_similar search (n=10) (different key than first query)
Warm subsequent most_similar search (n=10) (same key as first query)
First most_similar_approx search query (n=10, effort=1.0) (worst case)
First most_similar_approx search query (n=10, effort=1.0) (average case) (w/ disk persistent cache)
Subsequent most_similar_approx search (n=10, effort=1.0) (different key than first query)
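As a rough illustration of the design described above (SQLite as the data store, an index for key lookups, and LRU caching so that warm queries skip the database), here is a minimal sketch using only the Python standard library. It is not Magnitude's implementation or file schema: the `TinyVectorStore` class, its table layout, and the brute-force `most_similar` are hypothetical stand-ins chosen for this example, and the real library additionally relies on memory mapping, SIMD, and spatial indexing.

```python
import math
import sqlite3
import struct
from functools import lru_cache


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TinyVectorStore:
    """Toy key-vector store (hypothetical): SQLite plus an LRU cache."""

    def __init__(self, path=":memory:", dim=3, cache_size=1024):
        self.dim = dim
        self.db = sqlite3.connect(path)
        # PRIMARY KEY gives SQLite an index on `key`, so lookups avoid a table scan.
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS vectors (key TEXT PRIMARY KEY, vec BLOB)"
        )
        # Per-instance LRU cache: repeated ("warm") lookups are served from memory.
        self.query = lru_cache(maxsize=cache_size)(self._query_uncached)

    def add(self, key, vector):
        # Vectors are stored as packed 32-bit floats in a BLOB column.
        blob = struct.pack(f"{self.dim}f", *vector)
        self.db.execute("INSERT OR REPLACE INTO vectors VALUES (?, ?)", (key, blob))
        self.db.commit()
        self.query.cache_clear()  # cached entries may now be stale

    def _query_uncached(self, key):
        row = self.db.execute(
            "SELECT vec FROM vectors WHERE key = ?", (key,)
        ).fetchone()
        if row is None:
            raise KeyError(key)
        return struct.unpack(f"{self.dim}f", row[0])

    def most_similar(self, key, topn=10):
        # Brute-force scan over all rows; a real system would use a spatial index.
        target = self.query(key)
        scored = []
        rows = self.db.execute("SELECT key, vec FROM vectors WHERE key != ?", (key,))
        for other, blob in rows:
            vec = struct.unpack(f"{self.dim}f", blob)
            scored.append((other, cosine(target, vec)))
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:topn]
```

Passing a file path instead of `":memory:"` keeps the vectors on disk, so only the keys actually queried are read into memory. That is the same general idea behind lazy-loading, though on a much smaller scale.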
